Hybrid Storage

This page describes hybrid storage configurations and details about each storage medium.

Hybrid storage is available in all editions of Aerospike Database.

Overview

Aerospike stores data on the following types of media and combinations thereof:

- Dynamic Random Access Memory (DRAM).

- Non-Volatile Memory Express (NVMe) Flash or Solid State Drive (SSD).

- Persistent Memory (PMem).

- Traditional spinning media.

The Hybrid Memory System contains indexes and data stored in each node, handles interaction with the physical storage, contains modules for automatically removing old data from the database, and defragments physical storage to optimize disk usage.

Analyzing Hybrid Storage for Your Needs

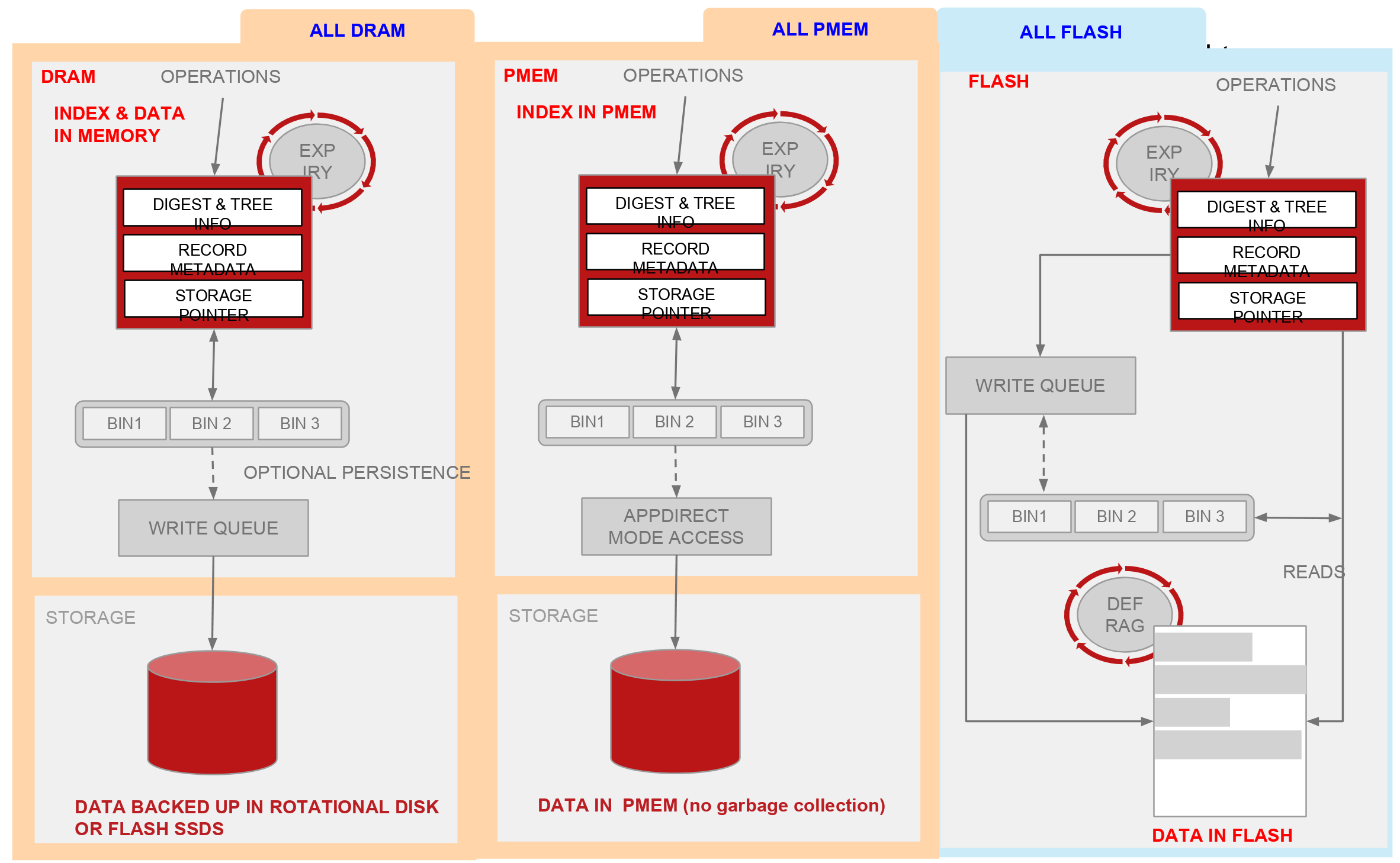

The diagram above shows possible configurations of hybrid storage engines.

Although storing both indexes and data in PMem might seem an obvious choice, their advantages differ somewhat. It is better to match their characteristics (along with those of NVMe Flash) to the data model of the client application. The full matrix of possibilities for placing the primary index and data is shown in the table below. Not all possibilities make sense: the ones that match some use cases are highlighted in blue and are elaborated upon below.

This table is a high-level comparison to quickly highlight differences among the hybrid storage options.

| Primary index storage | Data storage: NVMe Flash | Data storage: DRAM | Data storage: PMem |
| --- | --- | --- | --- |
| NVMe Flash | All NVMe Flash: Ultra-large record sets. | Disallowed. | Disallowed. |
| DRAM | Hybrid: best price-performance. | High performance. No persistence. | Not common. |
| PMem | Hybrid: Fast restart after reboot. Very large data sets. | Not recommended. | All PMem: Fast restart after reboot, with high performance. |
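The placement matrix can also be read as a simple lookup keyed by where the primary index and the data live. The following is a minimal sketch that transcribes the table; the names `PLACEMENTS`, `describe_placement`, and `is_allowed` are illustrative and are not part of any Aerospike API.

```python
# Placement matrix transcribed from the table above.
# Keys are (primary_index_medium, data_medium). All names here are
# hypothetical helpers for illustration, not Aerospike identifiers.
PLACEMENTS = {
    ("flash", "flash"): "All NVMe Flash: ultra-large record sets.",
    ("flash", "dram"): "Disallowed.",
    ("flash", "pmem"): "Disallowed.",
    ("dram", "flash"): "Hybrid: best price-performance.",
    ("dram", "dram"): "High performance. No persistence.",
    ("dram", "pmem"): "Not common.",
    ("pmem", "flash"): "Hybrid: fast restart after reboot. Very large data sets.",
    ("pmem", "dram"): "Not recommended.",
    ("pmem", "pmem"): "All PMem: fast restart after reboot, with high performance.",
}


def describe_placement(index_medium: str, data_medium: str) -> str:
    """Return the table entry for a primary-index/data placement."""
    key = (index_medium.lower(), data_medium.lower())
    if key not in PLACEMENTS:
        raise ValueError(f"unknown placement: {key}")
    return PLACEMENTS[key]


def is_allowed(index_medium: str, data_medium: str) -> bool:
    """True unless the table marks the combination as disallowed."""
    return describe_placement(index_medium, data_medium) != "Disallowed."


print(describe_placement("pmem", "flash"))
print(is_allowed("flash", "dram"))
```

Note that "Not common" and "Not recommended" combinations are still reported as allowed here; only the two entries the table marks as disallowed are rejected.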

Starting from the lower right, there are few downsides to using an all-PMem configuration. Performance is on par with DRAM, but at a lower cost per bit, and without giving up either persistence or fast recovery from reboots: in minutes rather than hours. The higher performance can be put to use in many ways, not limited to the following:

- More transactions per node: fewer upgrades as traffic grows.

- More computation per transaction, such as richer models for advertising technology or fraud detection.

Another possibility is enabling Strong Consistency (SC) mode or durable writes in applications where the need for real-time performance might have ruled this out in the past. Even when SC is not an absolute requirement, deploying it cost-effectively might be worthwhile for the operational efficiencies gained.

The ultimate limiting factor for data in PMem is the amount that the processor will support. Current 2nd Generation Intel Xeon Scalable (Cascade Lake) processors support a maximum of 3 TiB per socket, so even multi-socket systems can't accommodate very large databases. There may also be economic considerations because the Xeon processors with support for large address spaces carry a premium.

For extremely large databases, dedicating all the PMem to the primary index is a better choice. It is more cost-effective than DRAM, scales to a larger data set, and retains the fast-restart advantage of not having to rebuild the indexes. Even at petabyte scale, performance is excellent because of optimizations Aerospike has made to block mode Flash storage.

About namespaces, records, and storage

Different namespaces can have different storage engines. For example, you can configure small, frequently accessed namespaces in DRAM and put larger namespaces in less expensive storage such as SSD.

In Aerospike:

- Record data is stored together.

- The default storage size for one row is 1 MB.

- Storage is copy-on-write.

- Free space is reclaimed during defragmentation.

- Each namespace has a fixed amount of storage. Every node in the cluster must have the same namespaces, with the same amount of storage for each namespace.

You can configure namespace data storage (storage-engine), primary index, and secondary indexes to be stored in either SSD (flash), PMem or memory. Shared memory is available for Enterprise and Standard Editions of Aerospike (EE/SE), while process memory is available for Community Edition (CE).

DRAM

Pure DRAM storage, without persistence, provides higher throughput than persistent storage. Although modern Flash storage delivers very high performance, DRAM performs better at a much higher price point (especially when the cost of power is included).

Aerospike allocates data using JEMalloc, which allows allocation into different pools. Long-term allocations, such as those for the storage layer, can be allocated separately. JEMalloc has exceptionally low fragmentation properties.

Aerospike achieves high reliability by keeping multiple copies of the data in DRAM. Since Aerospike automatically reshards and replicates data on failure or during cluster node management, k-safety is obtained at a high level. When a node returns online, its data automatically populates from a copy.

Aerospike uses random data distribution to keep the amount of unavailable data very small when several nodes are lost. For example, consider a 10-node cluster with two copies of the data. If two nodes are lost simultaneously, the amount of unavailable data before replication is approximately 2%, or 1/50th of the data. With a persistent storage layer, reads always occur from a copy in DRAM. Writes occur through the data path described below.
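The 2% figure can be checked with a short calculation. The sketch below assumes that each partition's copies are placed on distinct nodes chosen uniformly at random, which is a simplification of the actual distribution algorithm; the function name `unavailable_fraction` is illustrative.

```python
from fractions import Fraction
from math import comb


def unavailable_fraction(nodes: int, copies: int, lost: int) -> Fraction:
    """Expected fraction of data whose copies all sit on lost nodes.

    Assumes each partition's copies land on distinct nodes chosen
    uniformly at random (a simplifying assumption for illustration).
    """
    if not 0 < copies <= nodes:
        raise ValueError("copies must be between 1 and the node count")
    if not 0 <= lost <= nodes:
        raise ValueError("lost must be between 0 and the node count")
    # Ways to place every copy on a lost node, over all possible placements.
    return Fraction(comb(lost, copies), comb(nodes, copies))


frac = unavailable_fraction(nodes=10, copies=2, lost=2)
print(frac)         # 1/45
print(float(frac))  # a little over 2%
```

Under this assumption the exact value is 1/45, which rounds to the approximate 2% quoted above. Losing a single node leaves no data unavailable, because the second copy always lives on a different node.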

SSD/Flash

When a write (update or insert) has been received from the client, a latch is taken on the row to avoid two conflicting writes on the same record for this cluster (in the case of a network partition, conflicting writes may be accepted to provide availability, and are resolved later). In some cluster states, data may also need to be read from other nodes and conflicts resolved. After write validation, the in-memory representation of the record is updated on the master. The data to be written to a device is placed in a buffer for writing. When the write buffer is full, it is queued to disk. Write buffer size (which is the same as the maximum row size) and write throughput determine the risk of uncommitted data, and configuration parameters allow flushing of these buffers to limit potential data loss. Replicas and their in-memory indexes are then updated. After all in-memory copies are updated, the result is returned to the client.
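The buffering step in this write path can be illustrated with a toy model. This is a minimal sketch, not Aerospike's implementation: the class `DeviceWriter`, its methods, and the buffer size used below are all hypothetical, and the model treats a buffer as full when the next record no longer fits.

```python
class DeviceWriter:
    """Toy model of buffered device writes (hypothetical, for illustration).

    Records accumulate in an in-memory write buffer. When the next record
    does not fit, the buffer is queued for writing to disk and a fresh
    buffer is started.
    """

    def __init__(self, buffer_size):
        self.buffer_size = buffer_size  # bytes; also the maximum record size
        self.current = []               # records in the open buffer
        self.used = 0                   # bytes used in the open buffer
        self.flush_queue = []           # full buffers waiting to reach disk

    def write(self, record):
        if len(record) > self.buffer_size:
            raise ValueError("record exceeds the write buffer size")
        if self.used + len(record) > self.buffer_size:
            self.flush()
        self.current.append(record)
        self.used += len(record)

    def flush(self):
        """Queue the open buffer to disk, as a periodic flush might do."""
        if self.current:
            self.flush_queue.append(self.current)
            self.current = []
            self.used = 0

    def uncommitted_bytes(self):
        """Bytes that would be lost if the node failed right now."""
        return self.used


writer = DeviceWriter(buffer_size=1024)
for _ in range(3):
    writer.write(b"x" * 400)

print(len(writer.flush_queue))     # 1 buffer queued to disk
print(writer.uncommitted_bytes())  # 400 bytes still only in memory
```

The sketch shows why buffer size and write throughput together bound the uncommitted data: a larger buffer or a slower write rate leaves records in memory for longer before they are queued, and an explicit `flush()` shortens that window.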

The Aerospike Defragmenter tracks the number of active records on each block on disk and reclaims blocks that fall below a minimum level of use.
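The bookkeeping described here can be sketched as a per-block counter of active records. The example below is an illustrative model only: the class `BlockTracker`, its method names, and the threshold value are assumptions, not the defragmenter's actual data structures or configuration.

```python
class BlockTracker:
    """Toy model of defragmentation bookkeeping (hypothetical names).

    Because storage is copy-on-write, updating a record writes the new
    version elsewhere and leaves the old version dead in its block.
    """

    def __init__(self, records_per_block, min_live_fraction):
        self.records_per_block = records_per_block
        self.min_live_fraction = min_live_fraction
        self.live = {}  # block_id -> number of active records

    def record_written(self, block_id):
        self.live[block_id] = self.live.get(block_id, 0) + 1

    def record_superseded(self, block_id):
        """An update or delete made one record in this block obsolete."""
        if self.live.get(block_id, 0) == 0:
            raise ValueError(f"block {block_id} has no active records")
        self.live[block_id] -= 1

    def reclaimable(self):
        """Blocks whose share of active records is below the minimum."""
        return sorted(
            block_id
            for block_id, count in self.live.items()
            if count / self.records_per_block < self.min_live_fraction
        )


tracker = BlockTracker(records_per_block=4, min_live_fraction=0.5)
for block_id in (0, 1):
    for _ in range(4):
        tracker.record_written(block_id)

# Three records in block 0 are rewritten elsewhere, leaving one active.
for _ in range(3):
    tracker.record_superseded(0)

print(tracker.reclaimable())  # [0]
```

In a real defragmenter, the surviving records of a reclaimable block would be copied into a fresh write buffer before the block is returned to the free pool; that step is omitted here.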

PMem

Aerospike Enterprise Edition 4.8 supports storing record data in Intel® Optane™ DC Persistent Memory (PMem). Optane combines byte-addressability and access times similar to DRAM with the persistence and density of Flash NVMe storage. You can learn more about persistent memory in Intel® Optane™ Persistent Memory.

In earlier releases, Aerospike supported storing the primary index in PMem. Aerospike version 4.8 extends PMem support to the record data itself. Combined, they offer unparalleled performance while retaining both persistence and fast reboot capabilities.